Intelligence at the edge of uncertainty

Watch the accompanying webinar!

Julia hosted a webinar going deeper into the subject of this blog article.

AI hallucinates. So do we.

Neuroscience has known this for years – perception is prediction, memory is reconstruction, confidence is not truth. AI just made it impossible to ignore. This article reframes hallucination not as a scandal, but as the shared condition of all intelligence operating at the edge of the knowable. The opportunity? To embrace ambiguity. To sharpen our empirical thinking and practices. To lead change in a world that was never as certain as we pretended.

Here's a confession: the mandatory AI training I recently completed at work told me something demonstrably false.

It explained that AI hallucinations occur when models "make things up" – as if this were some unique flaw, a bug waiting to be fixed. The training framed hallucination as the opposite of how human intelligence works. We recall; they fabricate. We access truth; they generate plausible fiction.

The more I thought about it, the more this framing struck me as backwards. Not because AI doesn't hallucinate – it absolutely does. But because we do too. Constantly. And this isn't a design flaw in either case. It's how minds – artificial and biological – navigate a world that refuses to hold still long enough to be perfectly known.

The brain as a prediction engine

Neuroscientist Anil Seth has spent years developing what might be the most challenging idea in contemporary consciousness research: that ordinary perception is itself a kind of hallucination – controlled, constrained by sensory feedback, but hallucination nonetheless.

In his work on predictive processing, Seth describes the brain not as a passive receiver downloading reality, but as a prediction engine constantly generating hypotheses about what's out there. Your experience of reading these words isn't a straightforward transmission from page to brain. It's your brain's best guess about what caused the light patterns hitting your retinas – a guess shaped by expectations, context, and prior experience, then checked against incoming sensory signals.

Your brain hallucinates your conscious reality

Provocatively, in his TED talk, Seth argues that "we're all hallucinating all the time" – and when those hallucinations align sufficiently, we call it reality. In fact, this reflects a broader neuroscientific view: perception is not a clean download of facts, but an active construction process in which the brain uses prior expectations to interpret incomplete sensory input.1

This isn't philosophical speculation. It's the emerging consensus in neuroscience: perception is active construction, not passive reception. Your brain doesn't show you the world. It shows you a continuously updated model of the world, refined (but never perfected) by sensory evidence.
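One way to make "a best guess checked against incoming signals" concrete is a toy Bayesian update. The sketch below is only an illustrative analogy, not Seth's model or anything from the cited research. The function name `update_belief` and the Gaussian assumptions (a normally distributed prior expectation and normally distributed sensory noise) are hypothetical choices made for this example.

```python
def update_belief(prior_mean: float, prior_var: float,
                  observation: float, observation_var: float) -> tuple[float, float]:
    """Combine a prior expectation with one noisy observation (Gaussian toy model).

    Returns the updated (mean, variance) of the belief.
    """
    # How much weight the new evidence gets: high when the prior is vague,
    # low when the observation is noisy.
    gain = prior_var / (prior_var + observation_var)
    # Move the guess toward the observation in proportion to that weight.
    posterior_mean = prior_mean + gain * (observation - prior_mean)
    # Uncertainty shrinks after the update, but it does not reach zero.
    posterior_var = (1.0 - gain) * prior_var
    return posterior_mean, posterior_var


# Equally trusted expectation and signal: the belief lands halfway between them.
clear_mean, clear_var = update_belief(0.0, 1.0, 2.0, 1.0)

# Very noisy signal: the belief barely moves away from the prior expectation.
noisy_mean, noisy_var = update_belief(0.0, 1.0, 2.0, 9.0)
```

In this toy model the updated belief always sits between expectation and evidence, and its remaining variance is smaller than before but still above zero, which mirrors the "refined (but never perfected)" point above.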

Memory is even less reliable

If perception is already interpretive, memory is doubly so.

For decades, we've known that memory isn't a filing cabinet. Research on reconsolidation has demonstrated something more unsettling: when we retrieve a memory, we don't simply replay it. We reconstruct it – and in doing so, we can alter it. The act of remembering opens a window during which the memory can be strengthened, weakened, or modified before being stored again.2

Elizabeth Loftus's pioneering work on the misinformation effect made this concrete. In her classic studies, simply changing the verb in a question – asking whether cars "hit" or "smashed" into each other – reliably altered what witnesses reported seeing. People remembered broken glass that was never there. They incorporated suggestions into their recollections without realising it. And they did so with confidence.3

These aren't malfunctions. They're features of a cognitive system optimised for something other than perfect accuracy: for making rapid decisions, for updating beliefs efficiently, for functioning in a world where waiting for certainty means missing the moment to act.

We fill in the gaps all the time

Think about your last working week. How many times did you confidently interpret a brief Teams message as friendly or hostile based on almost no evidence? How often did you fill in the blanks when someone gave you a partial explanation and you constructed the rest? How many times did you tell yourself a story about why a colleague behaved as they did – a story you then treated as fact?

We do this constantly:

... When we assume we know what someone "really meant" by their cryptic email.

... When we remember our own intentions more clearly than the impact of our words on others.

... When we treat a confident answer from a colleague as definitive truth, simply because it was delivered fluently.

... When we replay an argument and somehow always seem more reasonable in the reconstruction than we were in the moment.

In daily life, we rarely expect ourselves or others to be perfectly factual. We expect approximation. We expect interpretation. We expect good-faith meaning-making, not court stenography.

What we rely on isn't infallibility. It's correction – dialogue, feedback, evidence, and sometimes painful reality checks.

What AI hallucination actually reveals

LLMs don't recall information the way we imagine we do. They generate responses by predicting likely patterns based on training data and context. When they "hallucinate," they produce outputs that are plausible given those patterns but happen to be false.


OpenAI's own research on hallucination is revealing: they've shown that models hallucinate partly because standard training and evaluation reward confident answers over honest uncertainty. It's the multiple-choice exam problem – guessing gives you a chance at points, while admitting you don't know guarantees zero. Models learn to guess because that's what gets rewarded.4
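The exam analogy can be worked through as simple expected-value arithmetic. The sketch below is a minimal illustration under stated assumptions, not the evaluation method used in OpenAI's research. The names `expected_score` and `best_policy`, and the alternative scheme that deducts points for wrong answers, are hypothetical and introduced only for this example.

```python
ABSTAIN_SCORE = 0.0  # assumption: "I don't know" earns zero points under both schemes


def expected_score(p_correct: float, wrong_penalty: float = 0.0) -> float:
    """Expected points for answering: +1 if right, -wrong_penalty if wrong."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def best_policy(p_correct: float, wrong_penalty: float = 0.0) -> str:
    """Pick whichever option has the higher expected score."""
    if expected_score(p_correct, wrong_penalty) > ABSTAIN_SCORE:
        return "guess"
    return "abstain"


# A blind guess on a four-option question is right 25% of the time.
p = 0.25

# Right-or-wrong grading: guessing expects 0.25 points, abstaining gets 0.
binary_choice = best_policy(p)

# Hypothetical grading that deducts one point per wrong answer:
# guessing expects 0.25 - 0.75 = -0.5 points, so abstaining wins.
penalised_choice = best_policy(p, wrong_penalty=1.0)

print(binary_choice, penalised_choice)
```

Under right-or-wrong grading, guessing beats abstaining for any non-zero chance of being correct, which is the incentive described above. Only once wrong answers carry a cost does "I don't know" become the better-scoring response.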

But here's the uncomfortable parallel: isn't that also how we train humans?

Think about meetings where confidence is rewarded and uncertainty is seen as weakness. Think about the career incentives that push people to speak with authority even when they're unsure. Think about how we respond to "I don't know" versus a plausible-sounding answer, even when the former is more honest and the latter is a guess.

AI hallucination isn't a scandal unique to machines. It's a mirror reflecting something we already do – and something our social systems often encourage.

The real issue is not that AI sometimes produces uncertainty-shaped output. The real issue is whether we use it as if it were an oracle.

The fantasy of the infallible machine

There's an older hope embedded in how organisations have approached technology: that if we digitise enough, automate enough, and analyse enough, we'll finally achieve certainty. Predictability. Control. The holy grail of knowing exactly what's true, what comes next, and what to do.

AI does something more confronting than fulfil that hope. It refuses it.

AI mirrors back the actual nature of the world we work in: dynamic, ambiguous, probabilistic and only partially knowable.

That is not a bug. It is reality.

Generative AI surfaces the irreducible uncertainty that's always been there. It makes visible the gap between confident output and grounded truth – a gap we've been quietly ignoring in human cognition for centuries.

The world isn't a puzzle waiting to be solved. It's complex, dynamic, and only partially knowable. AI doesn't create that uncertainty. It exposes it.

Why this matters for how we work – AI emphasizes the value of Agility

This is where the conversation becomes directly relevant for all of us navigating daily life, and in particular for anyone working with transformation, change, or adaptive ways of working.

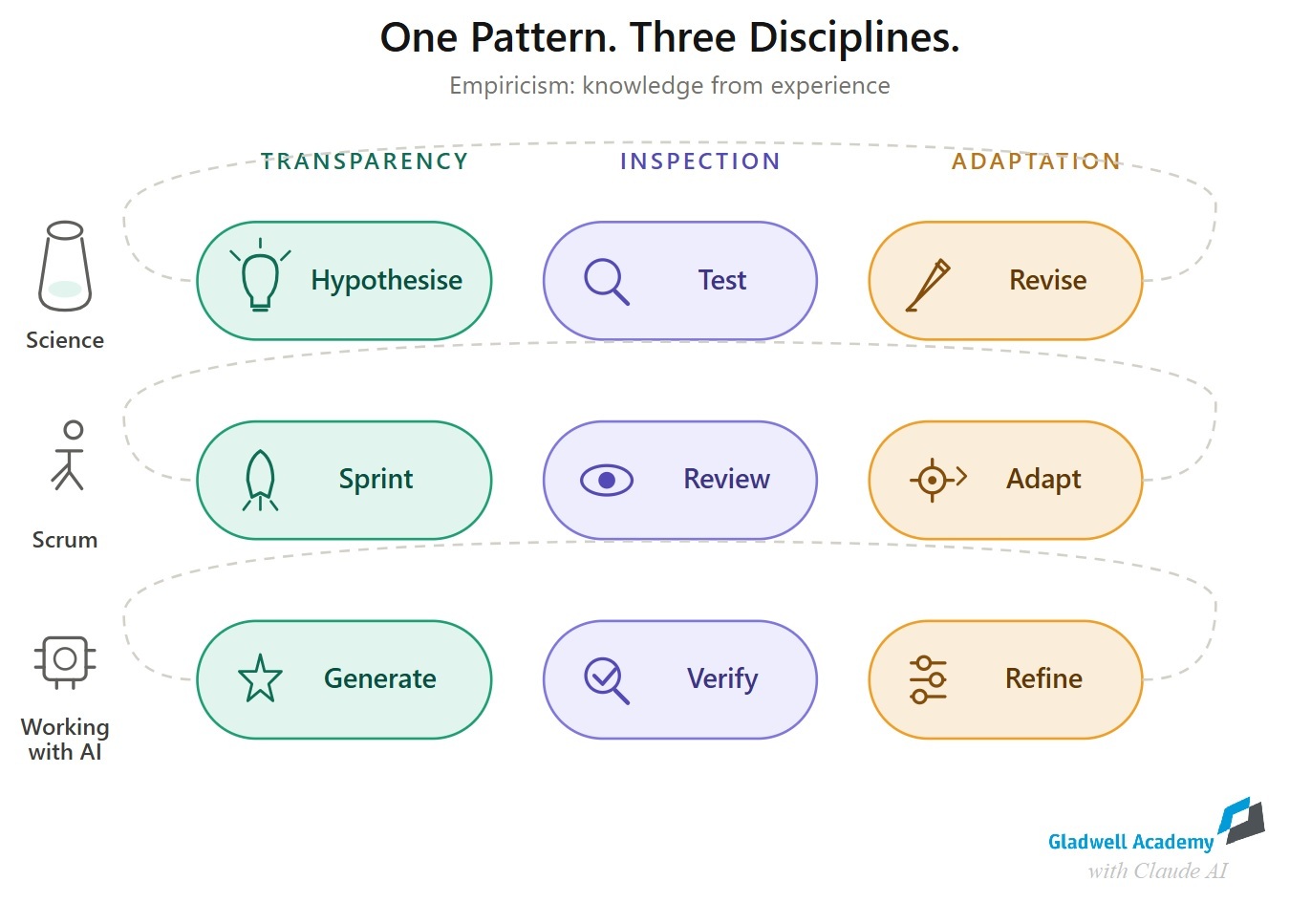

Scrum is explicitly founded on empiricism: the idea that knowledge comes from experience and decisions should be based on what is observed. Its 3 pillars – transparency, inspection, adaptation – are practices for engaging with uncertainty, not eliminating it.

The Agile Manifesto* similarly invites us to welcome changing requirements, not because change is fun, but because the world won't hold still long enough for our plans to remain correct.

In that sense, AI does not undermine agile thinking. It exposes why we need it.

... It forces us to stop conflating confidence with truth.

... It forces us to inspect before we act on an output.

... It forces us to adapt when new evidence appears.

... It forces us to ask the real questions that matter, not just accept faster answers.

... It forces us to be humble, to be willing to say and to hear: "This is my best current interpretation. Let's inspect it."

Used well, AI doesn't replace critical thinking. It demands it.

Putting the scientific mindset into practice: agile ways of working

Science has never just been about delivering certainty or, at its best, approximating it. It's also about disciplined engagement with uncertainty.

This is the same posture that underlies both the scientific method and Scrum's empiricism. A hypothesis is not a claim to truth – it's a structured guess, made explicit so it can be tested. A sprint is not a promise of delivery – it's an experiment, designed to generate learning. Both science and Scrum operate on the same fundamental insight: we cannot know the world by thinking about it. We can only know it by engaging with it, observing what happens, and adjusting our models accordingly.

In 2019, over 800 statisticians signed a statement calling for the abandonment of the term "statistical significance" – not because measurement doesn't matter, but because binary significance thresholds create false certainty. They proposed instead that scientists embrace uncertainty: accept it, be thoughtful, be open, be modest.4 As Ron Wasserstein, executive director of the American Statistical Association, put it: "Uncertainty is present always. That's part of science. So rather than trying to dance around it, we should accept it."

This shift – from demanding certainty to working skilfully with uncertainty – is precisely what both scientific practice and agile practice require in order to effectively navigate our VUCA world.

That same posture is what AI asks of us.

Not blind trust in its outputs. Not cynical dismissal of its usefulness.

But disciplined use – understanding what it's good at, what it's not, and where human judgement must step in.

AI excels at pattern recognition, relationship mapping, surfacing options, and generating hypotheses. It's less reliable when asked to produce unverified specifics – exact figures, precise citations, detailed facts it has no mechanism to check.

In other words: use AI as a thinking partner, not as an unquestioned authority that hands out unambiguous answers. The output is only as good as the clarity of your intent and your willingness to own what you do with it.

What this means in practice

If AI hallucination is structurally similar to human cognition, then a useful response isn't to demand impossible perfection from either. It's to build better practices around ambiguity:

More transparency: Be explicit about sources, methods, and confidence levels – whether the claim comes from a person or a model.

Faster feedback: Create loops that surface errors early rather than waiting for downstream consequences.

Clearer evidence: Distinguish between outputs that need verification (data points, citations, legal claims) and outputs where the value is in the thinking, not the precision.

Resilience: Cultivate the capacity to encounter wrongness – in AI, in others, in yourself – and emerge stronger. Build the emotional and organisational capacity to absorb setbacks as data, not defeat. True resilience isn't merely bouncing back; it's a transformative journey toward thriving amidst uncertainty.

Diverse thinking: Uncertainty rewards different ways of seeing. Seek out perspectives that don't mirror your own – because uniform thinking shares uniform blind spots. Cognitive diversity isn't a nice-to-have; it's how teams catch what any single way of thinking would miss.

Continuous alignment: Not the ritual of check-ins, but the discipline of ongoing calibration. Test your assumptions. Surface where you see things differently. Build the willingness to move forward together, even through disagreement. A genuine "agree to disagree" is alignment; false consensus is not.

The deeper reframe – What this means for *you*

AI hallucination isn't simply the scandal of a flawed machine. It's a reminder that intelligence – artificial or human – is always operating at the edge of uncertainty.

We are all, in our own ways, making sense of incomplete information fast enough to move. The answer isn't to demand impossible perfection from our tools or ourselves. The answer is to get better at working with ambiguity – at recognising that the map will never fully match the territory, and acting wisely anyway.

That's not just a smarter way to use AI.

It's a smarter way to lead ourselves, and to lead organisational change, through a complex and uncertain world.

And here's what makes this truly challenging: uncertainty doesn't stop at the individual. It ripples through teams, across departments, into entire organisations. Working with ambiguity at this scale requires systems thinking – the ability to see patterns, relationships, and dynamics that no single perspective can capture. Systems coaching equips leaders to cultivate this collective capacity: helping organisations become learning systems that embrace uncertainty rather than brittle structures that fear it.

As your partner in transformation, we're dedicated to guiding organisations toward this resilient and agile future – equipped to navigate whatever challenges may come their way.

*Password: Gladwell

Authored by Dr. Julia Heuritsch, assisted by Claude AI.

References

1 Seth, A. (2017). "Your brain hallucinates your conscious reality." TED Talk. Available at: https://www.ted.com/talks/anil_seth_your_brain_hallucinates_your_conscious_reality. See also: Seth, A.K. (2021). Being You: A New Science of Consciousness. Dutton or TED podcast.

2 Nader, K., Schafe, G.E., & LeDoux, J.E. (2000). "Fear memories require protein synthesis in the amygdala for reconsolidation after retrieval." Nature, 406, 722–726. See also: Lee, J.L.C. (2009). "Reconsolidation: maintaining memory relevance." Trends in Neurosciences, 32(8), 413–420.

3 Loftus, E.F. & Palmer, J.C. (1974). "Reconstruction of automobile destruction: An example of the interaction between language and memory." Journal of Verbal Learning and Verbal Behavior, 13, 585–589. See also: Loftus, E.F. (2005). "Planting misinformation in the human mind: A 30-year investigation of the malleability of memory." Learning & Memory, 12, 361–366.

4 Amrhein, V., Greenland, S., McShane, B., et al. (2019). "Scientists rise up against statistical significance." Nature, 567, 305–307. See also: Wasserstein, R.L., Schirm, A.L., & Lazar, N.A. (2019). "Moving to a World Beyond 'p < 0.05'." The American Statistician, 73(sup1), 1–19.